Operator Hypotheses #5: Patronus AI

Testing how authority-driven services gravity determines outcomes in AI evaluation.

Started: February 2026 (Month 29 post-seed, Month 21 post-Series A)

Check back: February 2027

Confidence Level: 60-70% (packaging + hiring are observable, but public narrative can lag reality by a quarter)

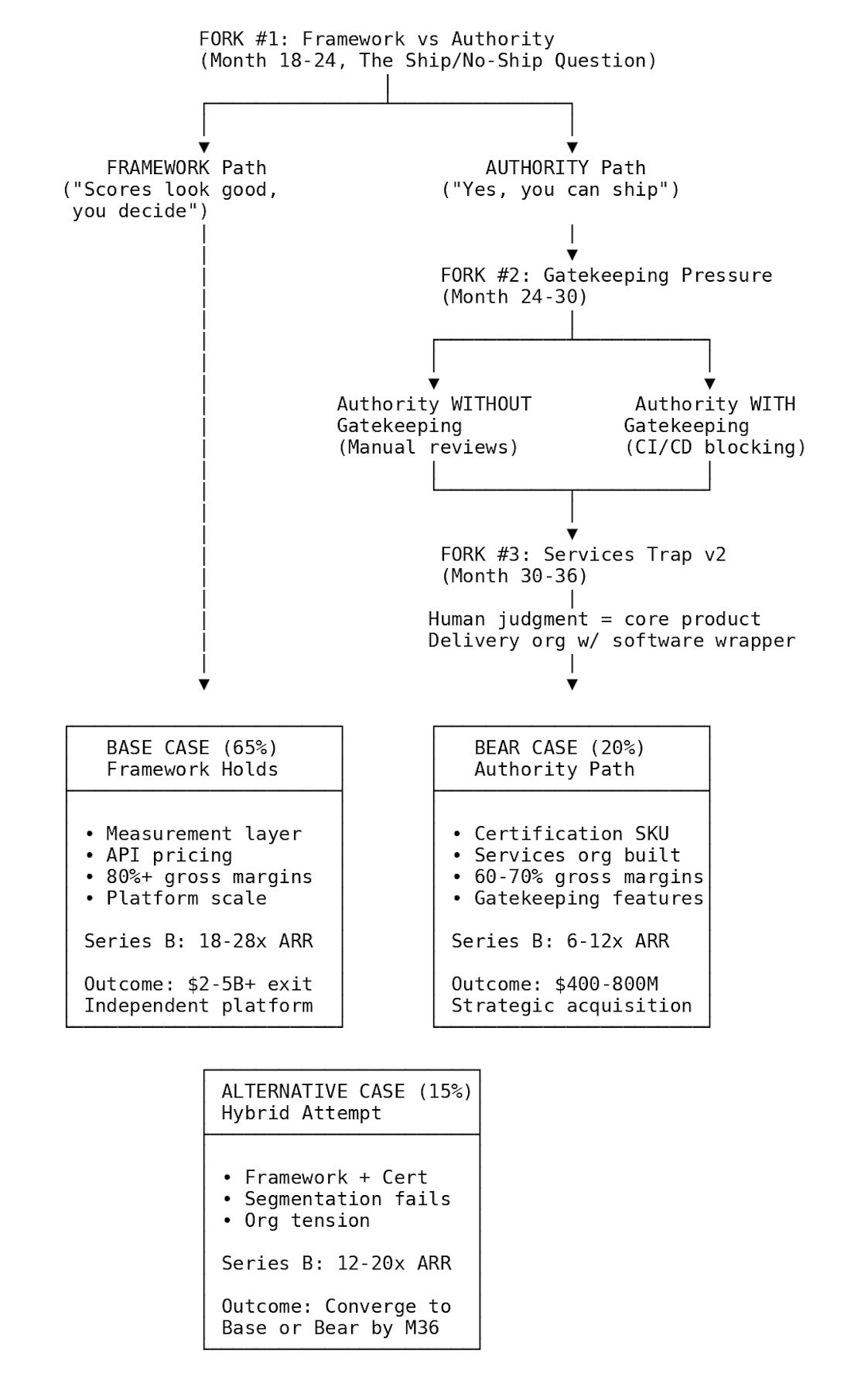

TL;DR: The fork is Framework vs Authority. If Patronus takes ship/no-ship responsibility, services gravity follows via gatekeeping. We’ll track five public signals through quarterly checkpoints to see which path they take.

About This Series

Operator Hypotheses tests whether execution forks in startups are predictable from public signals. Each entry follows one company over 12 months to see whether repeatable patterns appear at predictable moments.

Pattern #5 focuses on authority-driven services gravity: when taking responsibility for safety decisions turns evaluation software into high-touch delivery. This extends Pattern #2 (DatologyAI’s services trap) into a variant where human judgment becomes the core product.

See Pattern #0 for the complete framework.

Why Patronus

Patronus AI builds evaluation and optimization tools for AI systems—helping companies measure hallucinations, test model performance, and assess deployment readiness. The company emerged from stealth in September 2023 with a $3M seed round.

At Month 29 post-seed (21 months post-Series A), Patronus sits in a category where this fork tends to surface early.

They’re already positioned as a framework company (benchmarks + OSS models), which is exactly the posture that gets tested when a large customer asks for a ship/no-ship answer. By the time of the $17M Series A (May 22, 2024), Patronus was already working with customers like OpenAI and HP—enterprises with real deployment risk, which increases the odds they’ll be asked for an explicit ship/no-ship attestation. Research output is strong: FinanceBench (November 16, 2023), Lynx (July 11, 2024), Glider (December 19, 2024) show academic credibility and open-source commitment.

Recent product launches reinforce framework expansion. In December 2025, Patronus introduced Generative Simulators—adaptive training environments for AI agents that go beyond static benchmarks. Other 2025 releases include Percival Chat (an eval copilot), MEMTRACK (agent memory benchmark), and Prompt Tester. All represent deepening within the evaluation/optimization domain, not a shift toward authority products.

The company’s blog notes 15x revenue growth in 2025 from an early base, suggesting framework economics are working. This strengthens the framework baseline—but also increases the likelihood that large customers will feel confident enough to ask Patronus to take deployment responsibility.

But positioning at a constraint doesn’t prevent execution traps. In adjacent categories—infrastructure monitoring (Datadog) and application security (Veracode)—those that stayed 'tools' maintained clean economics and scaled to platform outcomes; those that became 'authorities' faced services-heavy delivery and strategic exits rather than independent scale.

If Patronus follows the common pattern for evaluation-adjacent companies, the period through February 2027 will determine whether they maintain framework positioning or accept authority responsibility—and whether authority leads to services gravity.

The Question You Can’t Say No To

You’re 18 months into building an AI evaluation platform. A Fortune 500 customer has been using your tools for months. Scores look solid. Hallucination rates are down. Their AI team trusts your measurements.

Then the VP of Engineering calls:

“We’re ready to deploy this chatbot at scale. We’ve run all your evaluations. The scores look good. So... are we okay to ship?”

Three months later, a hallucination makes headlines—and legal asks who signed off.

Say yes, and you’ve taken responsibility for deployment. If something breaks, your company’s name is attached. You’re no longer providing tools. You’re providing judgment.

Say “the scores suggest low risk, but you make the final call,” and the framework model holds. The customer decides. Your tools inform, but liability stays with them.

Then the follow-ups arrive.

“Can you sign off on this for our board?”

“Can we say ‘validated by Patronus’ in the risk memo?”

“Will you attend the deployment review?”

Each request inches the company from measurement layer to authority.

Once one customer gets approval, the next ten ask for it. And approval requires humans—your humans—reviewing specific use cases, interpreting edge cases, and absorbing context. Evaluation stops being a product. It becomes a service.

This fork determines whether Patronus stays infrastructure—or becomes a high-trust delivery org with software wrapped around it.

Fork #1: Framework vs Authority

This first fork is simple, not easy: do you help customers decide, or do you decide for them?

The Boundary Control Question

This fork is about ownership across three interfaces:

Product interface: Who owns the ship/no-ship decision? The user interpreting scores, or Patronus making a pass/fail call?

Org interface: Does Patronus build a services organization to carry deployment responsibility?

Data interface: Does Patronus need customer data and context access to “certify,” shifting custody expectations? Authority also tends to expand data access expectations, pulling the company into customer-specific context that software alone can’t abstract away.

Patronus launched as framework. Customers run tests, see scores, decide. Patronus provides measurement; customers own judgment.

In adjacent categories, this boundary appears during first production rollouts, when enterprises ask: “Can you approve this for production?”

At that point, two paths diverge:

Path A: Framework (Tools, Not Judgment)

Platform provides scores, benchmarks, tests

Users interpret results and make decisions

Marketing: “Evaluate using Patronus”

Liability stays with customer

Revenue model: platform fees, API calls, usage-based

Path B: Authority (Judgment Ownership)

Platform provides attestation or certification (explicit pass/fail)

Patronus takes responsibility for deployment readiness

Marketing: “Approved by Patronus”, “Patronus-certified”

Liability shifts to vendor

Revenue model: assessment fees, certification, professional services

Teams tell themselves it’s temporary. The org chart usually disagrees.

The Mechanism: Why Authority Creates Services Gravity

The causal chain:

Authority creates liability — Customer: “You said this was safe.” Incident occurs. Customer: “You certified this. What happened?”

Liability requires context — Generic benchmarks aren’t enough. Need specific use case understanding, customer data review, edge case interpretation.

Context requires humans — Can’t automate “is this safe for THIS use case?” Each customer’s “safe” differs. Regulatory requirements vary. Interpretation = expertise = humans.

Humans in loop = services model — Each approval requires review time. Can’t scale like API calls. Margins compress. Becomes professional services with software wrapper.

The internal change: The first time you approve a deployment, you’re hiring for delivery before admitting it. The second time, you’re writing approval playbooks. The third time, you’re negotiating liability language in every enterprise contract. What starts as “helping a strategic customer” becomes the operating model.

Why this matters: Authority looks like premium positioning but creates an operational model that can’t scale at software economics.

The counter-case: Automation + contractual disclaimers that keep judgment from becoming labor. The check-back tests whether Patronus can do that. Most teams think they can segment this boundary. In practice, segmentation tends to collapse once “approval” exists as an upsell.

Evidence: Evaluation-Adjacent Patterns

I analyzed companies in evaluation-adjacent spaces to see if this pattern holds.

Datadog (Infrastructure Monitoring) — Framework

Positioning: Measurement with customer-owned thresholds. Provides monitoring tools and dashboards; customers set their own alerts and deployment decisions. Datadog doesn’t approve or block deployments.

Business Model: Platform fees based on hosts/metrics, self-service for most customers, API-first pricing.

Outcome: IPO 2019, public-scale platform outcome. Platform economics maintained.

Key Insight: Infrastructure monitoring COULD take authority (“we approve your deployment”) but explicitly doesn’t. Framework positioning preserved scalability.

Veracode (Security Testing) — Authority → Acquired

Positioning: Assessment with attestation pressure. Started as security testing tools, evolved to “security certification.” Enterprises wanted “Veracode approved” stamp—shifting final security decision partially to Veracode rather than keeping it purely customer-owned.

Business Model: Assessment-based pricing; each assessment requires human review; professional services became significant.

Outcome: 2017 acquisition by CA Technologies for approximately $614 million. Good outcome, but the high-touch model limited its ability to scale on a platform trajectory.

Key Learning: Taking security authority = high-touch delivery. Can’t automate “this is secure enough” because liability is real.

The Segmentation Trap: Why “Both” Usually Collapses

A natural question: “Why not offer both? Framework for some customers, certification for others?”

The mechanism that makes segmentation unstable:

Sales incentives align to higher ACV — Once ANY customer buys “approval” (higher price), sales team will sell it everywhere

Product becomes de facto authority — Certification becomes the core offering; framework becomes entry point, not center. Engineering time follows: certification features get priority, services org gets headcount.

Customer expectations converge — Customers who bought framework demand same treatment: “Why can’t we get certified too?”

This is usually justified as “meeting customers where they are,” which is true—briefly. Clean segmentation requires ironclad governance most companies can’t maintain. Once authority exists as an option, it often becomes the default.

Patronus’s Current Position

Patronus is showing clear framework signals as of late 2025:

Research Credibility (Framework Signals):

FinanceBench (November 16, 2023): Financial QA benchmark with 10,231 questions. Released as research, not certification service. According to the FinanceBench paper, results showed poor performance for state-of-the-art models. Framework positioning: “here’s how to measure” not “we approve your model.”

Lynx (July 11, 2024): Open-source hallucination detection model released with Apache 2.0 license. Patronus describes it as achieving state-of-the-art performance. Framework: community can use without Patronus approval.

Glider (December 19, 2024): Patronus describes this as a 3.8B parameter judge/evaluation model released as open source. Framework: democratizing evaluation, not controlling it.

Platform Language:

From Series A announcement (May 22, 2024): “Using proprietary AI, the platform enables enterprise development teams to score model performance, generate adversarial test cases, benchmark models.”

Key phrase: “enables teams to score” — not “we score for you” or “we certify.”

Customer Positioning:

Patronus’s website currently says “Our customers include OpenAI, HP, and Pearson.” Additional customers mentioned in announcements and case studies include Nova AI, Emergence AI, Weaviate, Etsy, Gamma, Hospitable.com, Algomo. Language in case studies: “uses Patronus AI to evaluate”, “leverages Patronus for evaluation” — not “approved by Patronus.”

No authority signals yet in public positioning:

No “Patronus-certified” badge or seal

No “approved for production” service offering

No language about deployment responsibility

No regulatory certification partnerships announced

This doesn’t rule out approval-heavy deals; it just means they haven’t surfaced publicly.

Downstream Pressure: Gatekeeping (If Authority Accepted)

This downstream pressure is conditional on Fork #1. If Patronus maintains framework positioning, they likely stay assistive. If they accept authority, pressure to become a gatekeeper increases dramatically.

Assistive (Advisory): Provides scores and recommendations; user makes final deployment decision. Scalable: scores are automated.

Gatekeeper (Blocking): Blocks deployments below threshold; Patronus makes pass/fail determination. Not scalable: each edge case requires human review.

How it escalates: Authority accepted → Incident despite approval → Pressure to prevent future incidents → Introduce pass/fail gates → Gatekeeper role solidified, nearly impossible to walk back.

Downstream Manifestation: Services Trap v2 (If Authority + Gatekeeping)

Over the next 12-24 months (enterprise rollout + renewal cycles), the business model tends to lock in: platform with software economics, or services-heavy delivery with compressed margins.

The Distinction:

Traditional Services Trap (Pattern #1): Customer requests custom integration → Engineering diverted → Services revenue grows, margins compress. About CUSTOMIZATION (code branches).

Services Trap v2 (AI Evaluation): Customer requests deployment approval → Human judgment required → Professional services becomes material. About JUDGMENT (interpretation, liability). No configuration tool replaces human judgment. Much harder to escape.

Evidence from Adjacent Spaces:

Kount (Fraud Detection) — Fraud vendors often get pulled from scoring → decisioning. When customers want the vendor to own the block/allow decision boundary (not just provide risk scores), that authority shift often coincides with services org buildout and compressed margins relative to software-only models. Kount was acquired by Equifax in January 2021 for $640 million.

Three Scenarios

Base Case (65%): Framework → Platform Economics

What Happens:

Patronus maintains framework positioning through Series B and beyond. No certification product launch. No services organization build.

February 2027 Observable Signals:

Product/Packaging:

Platform fees, API tiers, usage-based pricing continue

No “certification” or “assessment” SKU

No “certified by” badge programs

Marketing: “evaluate”, “measure”, “test” (not “approve”, “certify”)

Organization:

Hiring: ML Engineers, Research Scientists, Platform Engineers

No: Consultants, Professional Services, Auditors, Assurance/Risk roles

Org chart: Head of Product, Head of Engineering, DevRel visible

No Head of Professional Services or Customer Success (delivery-focused)

Business Model:

Gross margins in software range (>75%)

Platform economics maintained

Financial-only investor base (no model provider strategics)

OSS Community:

Lynx/Glider adoption growing

Community contributions

No license restrictions or commercialization pressure

Outcome Trajectory: Platform-scale company analogous to Datadog’s infrastructure monitoring position. Every AI team uses the evaluation layer, but Patronus doesn’t own deployment decisions. Independent path to $2-5B+ outcome.

Implied Series B Pricing (ARR-based): Platform software economics with gross margins typically >75%. Expected range: ~12–20× ARR, driven by adoption depth and product surface area, not delivery scope. These ranges across all scenarios reflect recent infrastructure/SaaS vs services-heavy valuation patterns and are directional priors, not point estimates.

Alternative Case (20%): Framework + Strategic Validation

What Happens:

Same as Base Case (framework positioning maintained), but Series B includes a non-conflicted strategic co-investor (AWS, GCP, or Azure at <5% ownership stake). The cloud partnership accelerates distribution without creating control or authority pressure.

February 2027 Observable Signals:

All Base Case signals (framework language, platform hiring, no certification SKU) PLUS:

Cloud marketplace listing (AWS/GCP/Azure)

Co-marketing with cloud provider

Integration depth (native monitoring integration, billing integration)

Strategic investor disclosed but minority position

Outcome Trajectory: Independent platform with cloud endorsement. Accelerated growth from partnership while preserving platform economics. Still a path to a $2-5B+ outcome, but on a faster timeline.

Implied Series B Pricing (ARR-based): Multiple expansion from distribution leverage, not margin change. Expected range: ~18–28× ARR (upper end reflects category leadership signals), conditional on authority remaining absent and services immaterial.

Bear Case (15%): Authority → Services Gravity

What Happens:

Patronus accepts authority responsibility at Fork #1. Services organization builds to handle judgment-heavy delivery. Gross margins compress. Strategic acquisition follows.

February 2027 Observable Signals:

Product/Packaging:

“Certification” or “Assessment” SKU launched

“Certified by Patronus” or similar badge program

Marketing language: “approve”, “certify”, “validated by Patronus”

Deploy gate / CI blocking features released

Organization:

Hiring: Consultants, Implementation Engineers, Safety Specialists, Auditors

Professional Services Manager, Solutions Architects visible in LinkedIn/jobs

Org chart shows Head of Professional Services or Customer Success (delivery)

Engineering hiring slower than customer growth (services-heavy)

Business Model:

Gross margins <70% or declining trend

Professional services mentioned in Series B materials

Assessment/certification revenue growing faster than platform

Strategic investors (model providers or consultancies) in Series B

Customer Language:

Testimonials emphasize “certification” not “platform”

“Patronus approved our deployment” in case studies

Risk/compliance framing dominant

Outcome Trajectory: Strategic acquisition by a consultancy (Accenture, Deloitte), an AI safety specialist, or a model provider in the $400-800M range. Good outcome for early investors but compressed relative to platform potential. Valuation multiple of 6-10x revenue (services compression) vs 15-20x (platform).

Implied Series B Pricing (ARR-based): Software-assisted services economics with gross margins drifting <70%. Expected range: ~6–12× ARR. Even with growth, multiples compress due to delivery risk and labor coupling.
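To see what the three scenarios imply when blended, here is a minimal sketch that weights each scenario's ARR-multiple range by its stated probability. The probabilities and ranges come from the scenarios above; the choice to weight the low and high endpoints separately is my own simplification, and the function and variable names are hypothetical. Treat the result as a directional prior, not a valuation.

```python
# Scenario probabilities and implied Series B ARR-multiple ranges, as stated in the text.
SCENARIOS = {
    "base": {"prob": 0.65, "multiple": (12.0, 20.0)},
    "alternative": {"prob": 0.20, "multiple": (18.0, 28.0)},
    "bear": {"prob": 0.15, "multiple": (6.0, 12.0)},
}


def weighted_multiple_range(scenarios):
    """Return the probability-weighted (low, high) ARR multiple.

    Assumption: endpoints are weighted independently, which ignores
    any distribution shape inside each range.
    """
    total_prob = sum(s["prob"] for s in scenarios.values())
    if abs(total_prob - 1.0) > 1e-9:
        raise ValueError(f"Scenario probabilities sum to {total_prob}, expected 1.0")
    low = sum(s["prob"] * s["multiple"][0] for s in scenarios.values())
    high = sum(s["prob"] * s["multiple"][1] for s in scenarios.values())
    return low, high


low, high = weighted_multiple_range(SCENARIOS)
print(f"Weighted implied multiple: ~{low:.1f}x to ~{high:.1f}x ARR")
# prints: Weighted implied multiple: ~12.3x to ~20.4x ARR
```

The blended range sits close to the Base Case range because the Alternative Case premium and the Bear Case discount roughly offset at these weights.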

Fig. 1: Execution paths after the Ship/No-Ship question

OSS and Independence (Conditional Signals)

Open source strategy and investor independence matter—but only after the authority fork.

If Framework: OSS expands credibility and standards. Defensibility through adoption, not secrets.

If Authority: OSS becomes risky (giving away judgment methods). Pressure to restrict arises. But community already adopted open methods—tightening triggers backlash.

Current Structure: Series A led by Notable Capital (financial), with Lightspeed and Datadog (<10%, non-conflicted). No model provider strategics. No cloud platform strategics. Right structure for maintaining independence today.

Conditional on Fork #1: Framework → Generous OSS + Financial investors + Platform economics = Independent infrastructure. Authority → OSS tension + Strategic investors + Services economics = Strategic exit.

Signal Tracker & Quarterly Checkpoints

Authority pressure often surfaces first in hiring (building delivery capacity), then packaging (monetizing that capacity), then workflow (operationalizing authority). We track five signals quarterly through 2026.

Q2 2026 (April-June): Hiring Drift

What this tests: Whether authority pressure is being absorbed through organizational changes before product or marketing shifts.

Signals to watch:

First appearance of roles titled: Consultant, Implementation Engineer, Solutions Architect, Assurance/Risk Analyst, or any role implying customer-specific judgment

Delivery-oriented hiring becomes material (>20% of new hires in the quarter)

ANY Principal/Director-level delivery hire (signals strategic commitment, not just capacity)

Interpretation:

Framework holding: Hiring remains engineering- and research-heavy (ML Engineers, Research Scientists, Platform roles)

Authority pressure emerging: Even 1-2 delivery hires signal early services gravity; senior delivery hire is stronger signal
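The Q2 check can be sketched as a simple title classifier. This is a rough illustration, assuming you have collected a quarter's new-hire or job-posting titles by hand; the keyword lists are taken from the roles named in this post, and the function name and substring-matching approach are hypothetical choices that will miss unusual titles.

```python
# Delivery-oriented title fragments, drawn from the roles listed in this post.
DELIVERY_KEYWORDS = (
    "consultant",
    "implementation engineer",
    "solutions architect",
    "assurance",
    "risk analyst",
    "auditor",
    "professional services",
)
# Seniority markers for the "Principal/Director-level delivery hire" signal.
SENIOR_KEYWORDS = ("principal", "director")


def hiring_drift(new_hire_titles, threshold=0.20):
    """Summarize delivery-oriented hiring for one quarter.

    `threshold` is the >20% materiality line from the Q2 checkpoint.
    """
    titles = [t.lower() for t in new_hire_titles]
    delivery = [t for t in titles if any(k in t for k in DELIVERY_KEYWORDS)]
    share = len(delivery) / len(titles) if titles else 0.0
    senior = any(any(s in t for s in SENIOR_KEYWORDS) for t in delivery)
    return {
        "delivery_hires": len(delivery),
        "delivery_share": share,
        "material": share > threshold,
        "senior_delivery_hire": senior,
    }


# Illustrative titles only, not real Patronus postings.
example = [
    "ML Engineer",
    "Research Scientist",
    "Platform Engineer",
    "Solutions Architect",
    "Director of Professional Services",
]
print(hiring_drift(example))
```

Note that the interpretation above treats even one or two delivery hires as an early signal, so `delivery_hires` matters on its own, not just the `material` flag.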

Q3 2026 (July-September): Packaging Boundary

What this tests: Whether authority is being monetized explicitly—even if framed as “optional” or “enterprise-only.”

Signals to watch:

Certification, Assessment, Validation, or Attestation SKU appears

Pricing tiers implying pass/fail outcomes (not just usage-based)

“Production-ready”, “deployment-approved”, or “certified by Patronus” language

Badge or seal program launched

Interpretation:

Framework holding: API tiers, usage-based pricing, no approval framing

Authority accepted: Any approval SKU crosses the boundary—even as premium tier
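For the language side of the packaging check, a small keyword scan is enough to flag copy worth reading closely. The sketch below is a hypothetical helper, not a tool Patronus or anyone else ships; the term lists come from the framework and authority vocabulary used in this post, and the sample strings are invented for illustration.

```python
import re

# Framework vocabulary: "evaluate", "measure", "test".
FRAMEWORK_PATTERNS = [r"\bevaluat\w*", r"\bmeasur\w*", r"\btest(?:s|ed|ing)?\b"]
# Authority vocabulary: "approve", "certify", "validated by", "production-ready", "attestation".
AUTHORITY_PATTERNS = [
    r"\bapprov\w*",
    r"\bcertif\w*",
    r"\bvalidated by\b",
    r"\bproduction-ready\b",
    r"\battest\w*",
]


def classify_copy(text):
    """Count framework vs authority terms in a piece of marketing copy."""
    lowered = text.lower()
    framework_hits = sum(len(re.findall(p, lowered)) for p in FRAMEWORK_PATTERNS)
    authority_hits = sum(len(re.findall(p, lowered)) for p in AUTHORITY_PATTERNS)
    return {
        "framework_hits": framework_hits,
        "authority_hits": authority_hits,
        # Any approval framing counts, mirroring the "any approval SKU" rule.
        "authority_language": authority_hits > 0,
    }


# Invented sample copy for illustration.
print(classify_copy("Teams use Patronus to evaluate and test agents."))
print(classify_copy("Certified by Patronus and approved for production."))
```

A hit here is only a prompt to look at the pricing page and SKU list; language alone is the softer signal.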

Q4 2026 (October-December): Workflow Control

What this tests: Whether Patronus is moving from advisory measurement into operational gatekeeping.

Signals to watch:

CI/CD blocking features released

Hard deploy gates tied to evaluation thresholds

Customer stories/case studies emphasizing prevention vs visibility

Integration docs showing pass/fail enforcement (not just alerting)

Interpretation:

Framework holding: Observability-only integrations (dashboards, alerts, recommendations)

Gatekeeper forming: Blocking capability implies de facto authority—even if “optional”
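The difference between an observability-only integration and a deploy gate is small in code and large in ownership. Here is a minimal sketch of both, assuming a single score where higher is better; the class and function names are hypothetical and do not reflect any actual Patronus API.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    metric: str
    score: float      # higher is better in this sketch
    threshold: float  # chosen by the customer in the advisory model


class DeploymentBlocked(Exception):
    """Raised when the gate, not the customer, stops a release."""


def advisory_report(result):
    """Framework posture: report the score and leave the decision to the customer."""
    status = "below" if result.score < result.threshold else "at or above"
    return (
        f"{result.metric}: {result.score:.2f} is {status} "
        f"threshold {result.threshold:.2f}. Deployment decision is yours."
    )


def deploy_gate(result):
    """Gatekeeper posture: the vendor's check decides whether the release proceeds."""
    if result.score < result.threshold:
        raise DeploymentBlocked(
            f"{result.metric} {result.score:.2f} < {result.threshold:.2f}"
        )
    return True


result = EvalResult(metric="hallucination_pass_rate", score=0.91, threshold=0.95)
print(advisory_report(result))  # informs, never blocks
try:
    deploy_gate(result)
except DeploymentBlocked as exc:
    print(f"Blocked: {exc}")  # same score, but now the tool made the call
```

Both functions read the same number. The second one is where edge-case appeals, overrides, and human review start to accumulate.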

Checkpoint Roll-Up Logic

0 authority signals detected: Framework path highly likely (Base Case 65%)

1 authority signal detected: Drift phase—authority pressure exists but containable (Alternative Case possible)

2 authority signals detected: Authority path likely locked—reversal difficult (Bear Case probable)

3 authority signals detected: Services gravity effectively inevitable (Bear Case 85%+)
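The roll-up is a lookup on how many of the three quarterly checkpoints fired. A minimal sketch, with hypothetical function and argument names and the interpretations copied from the list above:

```python
# Interpretation by number of authority signals detected across Q2-Q4.
ROLL_UP = {
    0: "Framework path highly likely (Base Case)",
    1: "Drift phase: authority pressure exists but is containable",
    2: "Authority path likely locked; reversal difficult (Bear Case probable)",
    3: "Services gravity effectively inevitable (Bear Case)",
}


def roll_up(hiring_drift, packaging_boundary, workflow_control):
    """Map the three checkpoint booleans to the roll-up interpretation."""
    count = sum(bool(flag) for flag in (hiring_drift, packaging_boundary, workflow_control))
    return count, ROLL_UP[count]


# Example: delivery hiring detected in Q2, nothing in Q3 or Q4.
print(roll_up(hiring_drift=True, packaging_boundary=False, workflow_control=False))
```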

Important observability note: Teams can “talk framework” in marketing while quietly selling approval-heavy deals. Language is necessary but insufficient. Watch hiring patterns (Q2), product packaging (Q3), and workflow features (Q4) in parallel—those are harder to fake.

If you only track two things: packaging (Q3) and hiring titles (Q2). These are the hardest signals to spin, and they appear earliest in the sequence.

February 2027 Check-Back

Primary Falsifier

If Patronus launches a certification product but there are still no services/delivery roles (consultant, auditor, implementation consultant, solutions architect, professional services) in job postings or LinkedIn titles, the services-gravity mechanism is wrong.

This is the cleanest test. The hypothesis is that authority → judgment ownership → human-in-loop → services org. If authority doesn’t produce observable services/delivery hiring, the causal chain breaks.

Check-Back Scoring

Score these 5 questions in February 2027:

□ Certification SKU launched?

□ Services/delivery roles visible in job postings over 6 months?

□ “Approval” or “certified” language on website/blog?

□ Deployment gate / CI blocking product released?

□ Professional services mentioned in Series B materials?

Scoring:

0-1 Yes → Framework path confirmed

2-3 Yes → Hybrid/unclear (watch for drift)

4-5 Yes → Authority path confirmed
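The scoring rule above is mechanical enough to write down. This is a minimal sketch with hypothetical key names for the five questions; the thresholds are the ones stated in the scoring list.

```python
# The five check-back questions, keyed by short hypothetical identifiers.
CHECKBACK_QUESTIONS = (
    "certification_sku_launched",
    "delivery_roles_in_job_postings",
    "approval_language_on_site",
    "deploy_gate_released",
    "services_in_series_b_materials",
)


def score_checkback(answers):
    """Return (yes_count, verdict) for a dict of question -> bool."""
    missing = [q for q in CHECKBACK_QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"Unanswered questions: {missing}")
    yes_count = sum(bool(answers[q]) for q in CHECKBACK_QUESTIONS)
    if yes_count <= 1:
        verdict = "Framework path confirmed"
    elif yes_count <= 3:
        verdict = "Hybrid/unclear (watch for drift)"
    else:
        verdict = "Authority path confirmed"
    return yes_count, verdict


# Illustrative answers only; the real values are filled in at the February 2027 check-back.
example = dict.fromkeys(CHECKBACK_QUESTIONS, False)
example["delivery_roles_in_job_postings"] = True
example["approval_language_on_site"] = True
print(score_checkback(example))  # (2, 'Hybrid/unclear (watch for drift)')
```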

What to Watch:

Language signals (softer): Marketing copy, blog posts, press releases, customer testimonials, fundraising narrative

Structural signals (harder): Hiring patterns via LinkedIn, product SKUs and pricing pages, integration types (observability vs blocking), org chart changes (services team formation)

If language and structure diverge, trust structure. Companies optimize messaging but can’t fake organizational reality.

References

Fork #1: Framework vs Authority

[1] Datadog. (2024). “Datadog Investor Relations.”

https://investors.datadoghq.com/

[2] CA Technologies. (2017). “CA Technologies announces agreement to acquire Veracode.” SEC Form 8-K, Exhibit 99.1.

https://www.sec.gov/Archives/edgar/data/356028/000119312517071784/d349815dex991.htm

Downstream

[3] Equifax. (2021). “Why the Equifax Acquisition of Kount is a Big Deal.”

https://www.equifax.com/newsroom/all-news/-/story/why-the-equifax-acquisition-of-kount-is-a-big-deal/

Patronus AI

[4] PRNewswire. (2023). “Patronus AI Launches Out of Stealth to Help Enterprises Deploy Large Language Models Safely.”

https://www.prnewswire.com/news-releases/patronus-ai-launches-out-of-stealth-to-help-enterprises-deploy-large-language-models-safely-301927641.html

[5] Patronus AI. (2024). “Announcing our $17M Series A.”

https://www.patronus.ai/blog/announcing-our-17-million-series-a

[6] TechCrunch. (2024). “Patronus AI is off to a magical start as LLM governance tool gains traction.”

https://techcrunch.com/2024/05/22/patronus-ai-is-off-to-a-magical-start-as-llm-governance-tool-gains-traction/

[7] Patronus AI. (2023). “Patronus AI launches FinanceBench, the industry’s first benchmark for LLM performance on financial questions.”

https://www.patronus.ai/announcements/patronus-ai-launches-financebench-the-industrys-first-benchmark-for-llm-performance-on-financial-questions

[8] Patronus AI. (2024). “Lynx: State-of-the-Art Open Source Hallucination Detection Model.”

https://www.patronus.ai/blog/lynx-state-of-the-art-open-source-hallucination-detection-model

[9] Patronus AI. (2024). “GLIDER: State-of-the-Art SLM Judge.”

https://www.patronus.ai/blog/glider-state-of-the-art-slm-judge

[10] Patronus AI. (2024). “Patronus AI | Powerful AI Evaluation and Optimization.” (Customer listing: “Our customers include OpenAI, HP, and Pearson”)

https://www.patronus.ai/

[10a] Patronus AI. (2024). “Datasets & Benchmarks for AI Agents.” (Backup source, same customer listing)

https://www.patronus.ai/datasets-benchmarks-for-ai-agents

[11] GitHub. (2024). “patronus-ai/financebench.”

https://github.com/patronus-ai/financebench

[12] GitHub. (2024). “patronus-ai/glider.”

https://github.com/patronus-ai/glider

[13] arXiv. (2023). “FinanceBench: A New Benchmark for Financial Question Answering.”

https://arxiv.org/abs/2311.11944

[14] Patronus AI. (2024). “Press.” (Confirmation of Lynx, GLIDER, FinanceBench releases)

https://www.patronus.ai/press

[15] Patronus AI. (2025). “Introducing Generative Simulators: Autonomously Scaling Environments for Agents.” December 17, 2025.

https://www.patronus.ai/blog/introducing-generative-simulators

[16] PRNewswire. (2025). “Patronus AI Introduces Generative Simulators, Outlining Adaptive ‘Practice Worlds’ for the Next Wave of AI Agents.” December 17, 2025.

https://www.prnewswire.com/news-releases/patronus-ai-introduces-generative-simulators-outlining-adaptive-practice-worlds-for-the-next-wave-of-ai-agents-302644731.html

This is pattern research testing whether execution forks are predictable from public signals. It is not investment advice. Predictions will be evaluated February 2027.

Pattern tracking:

Pattern #1: Research Grid (Month 11) - Services trap prediction - Check-back April 2026

Pattern #2: DatologyAI (Month 25) - Services trap verification - Check-back October 2026

Pattern #3: Chai Discovery (Month 20) - Compound fork effects - Check-back October 2026

Pattern #4: Flux (Month 29) - GTM lock-in - Check-back January 2027