Operator Hypotheses #4: Flux

Testing how early data custody decisions constrain GTM strategy

Started: January 2026 (Month 29 post-seed)

Seed: $5.95M (August 2, 2023)

Investors: Glasswing Ventures (lead), Runtime Ventures, True Ventures

Final Check: January 2027 (Month 41)

About This Series

Operator Hypotheses examines whether early execution forks in startups are predictable from public signals. Each entry follows one company for 12 months to test whether its trajectory aligns with structural constraints identified months—or years—earlier.

Pattern #4 looks at a compound fork inside AI developer tools:

How an early data custody decision quietly constrains GTM strategy long before the team realizes it.

See Pattern #0 for the full framework.

The Bottleneck That Moved

By 2025, AI coding assistance had become standard. GitHub’s research found that developers in a controlled experiment completed a coding task about 55% faster with AI assistance.¹ Tens of millions of developers encountered AI-augmented workflows through GitHub, IDE integrations, and internal rollouts.

Velocity increased everywhere.

But understanding did not.

Code volume surged. Pull requests multiplied. Engineering leaders suddenly faced codebases evolving faster than they could read them, let alone reason about architectural drift, risk, or quality.

Flux positioned itself precisely at this new constraint:

AI-native engineering intelligence for leaders managing AI-accelerated codebases.

Somewhere between Month 9 and Month 24, two quiet decisions shaped Flux’s trajectory. The first was a conscious choice made inside early enterprise deals. The second wasn’t a choice at all: it was a consequence that closed off an entire GTM path without anyone needing to say “no.”

Pattern #4 asks whether you can see that happening from the outside.

Why Flux Is the Right Company to Study

When AI collapsed the bottleneck on code generation, it created a new one:

leadership visibility.

Teams could ship faster, but leaders could no longer keep up with what was being shipped.

GitClear’s 2024 code analysis found that AI-generated code exhibited 2–3x more duplication than human-written code.² Architectural complexity rose. Review throughput lagged behind generation throughput.

Flux does not sell “AI coding assistance.” It sells understanding to the people accountable for code quality and system stability.

That focus makes the founding team’s background relevant:

Ted Julian, CEO: four enterprise software exits (acquirers include IBM, Tektronix, and Symantec) and SVP Product at Devo through Series E

Adrianna Gugel, CPO: repeated 0→1 product builder

Aaron Beals, CTO: engineering leader in enterprise SaaS

This is a team built to sell to VP Engineering and CTO buyers.

And the absence is just as telling:

no DevRel DNA.

No community builders. No open-source maintainers. No developer evangelists.

That gap becomes important once the architectural constraint shows up.

Where the Fork Quietly Appeared

We can’t see Flux’s internal discussions. But we can reconstruct the likely dynamics from standard enterprise infosec patterns and today’s product surface.

This reconstruction is based on typical enterprise sequences—nothing here is insider knowledge.

Imagine the team around Month 10.

The core analysis engine works. Prospects are interested. Enterprise buyers run early pilots. Then security reviews begin, and the same set of questions appears in nearly every devtools sale that touches source code:

Where does our code get analyzed?

Does anyone outside our organization see it?

Does your AI learn from our patterns?

Do improvements trained on our data benefit your other customers?

And here is the fork.

Path A: Cross-Customer Learning

AI improves for everyone as usage grows

True network effects

Strong free-tier economics

Natural path to Product-Led Growth (PLG)

Path B: Customer-Siloed Analysis

No cross-learning

Every customer isolated

AI does not benefit from aggregate patterns

Free tier becomes expensive and non-strategic

Shifts the company toward enterprise motion

Path A creates a compounding product.

Path B creates a compliant one.

And in early enterprise cycles, compliance usually wins.

Once Flux committed to strict isolation (if they did), the consequences wouldn’t show up for months, but they would show up.

What the Outside Shows at Month 29

We cannot see Flux’s data flows.

But we can see enough to infer which side of the fork they likely landed on.

1. The sandbox uses demo data, not real repositories

https://sandbox.trial1.askflux.ai

A polished, frictionless demo—but not a self-serve trial.

2. No self-serve onboarding path

There is no “Sign up → Connect repo → Start analyzing” flow.

3. Messaging reinforces isolation

“Your repo.” “Your codebase.”

No language about models improving across customers.

4. The EULA allows aggregation—but the product doesn’t show it

“Company reserves the right to use data and data analytics to improve the Services…”³

Legal permission ≠ architectural reality.

No public evidence this is happening.

5. The persona is enterprise leadership

VP Eng, CTO, engineering managers - not developers.

6. The demo CTA exists but is hidden

https://askflux.ai/requestdemo

Present, but not surfaced as a primary motion.

Inference: Customer-siloed architecture is the most likely explanation.

Confidence: 60% (based on product and GTM signals, not internal proof).

Why This Fork Collapses PLG

If Flux chose siloed analysis, another fork resolves itself automatically.

PLG works only if:

The product improves as more people use it (network effects), or

Free users cost almost nothing

Siloed analysis has neither.

Every repo analyzed is compute-heavy.

There is no shared learning.

No network effect offsets the cost.

You can offer a demo.

You cannot offer a meaningful free tier with real code.

The product can feel PLG.

The business model cannot be PLG.
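The rule in this section can be written down as a small check. This is a minimal sketch, not a model of Flux's actual economics: the class name, the function name, the $1 cutoff for "costs almost nothing," and the $20 per-user compute figure are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class FreeTierProfile:
    """Hypothetical description of a free tier; every field is illustrative."""
    has_network_effects: bool      # does the product improve as usage grows?
    cost_per_free_user_usd: float  # assumed monthly compute cost per free user


def plg_is_viable(profile: FreeTierProfile, near_zero_cost_usd: float = 1.0) -> bool:
    """Apply the rule above: PLG needs network effects OR near-free users.

    near_zero_cost_usd is an assumed cutoff for "costs almost nothing".
    """
    cheap_enough = profile.cost_per_free_user_usd <= near_zero_cost_usd
    return profile.has_network_effects or cheap_enough


# Path A: cross-customer learning offsets the cost of free usage.
path_a = FreeTierProfile(has_network_effects=True, cost_per_free_user_usd=20.0)
# Path B: siloed analysis, compute-heavy per repo, no shared learning.
path_b = FreeTierProfile(has_network_effects=False, cost_per_free_user_usd=20.0)

print(plg_is_viable(path_a))  # True
print(plg_is_viable(path_b))  # False
```

With identical per-user cost, the only thing separating the two paths is whether usage feeds back into the product, which is the point of the fork.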

The Demo That Reveals the Constraint

Flux’s sandbox is polished, fast and frictionless.

But it is not PLG.

What’s missing:

Repo connection

Pricing tiers

Team expansion

Self-serve billing

Free → paid funnel

This is demo mechanics wrapped around enterprise economics.

The lock is visible if you know where to look.

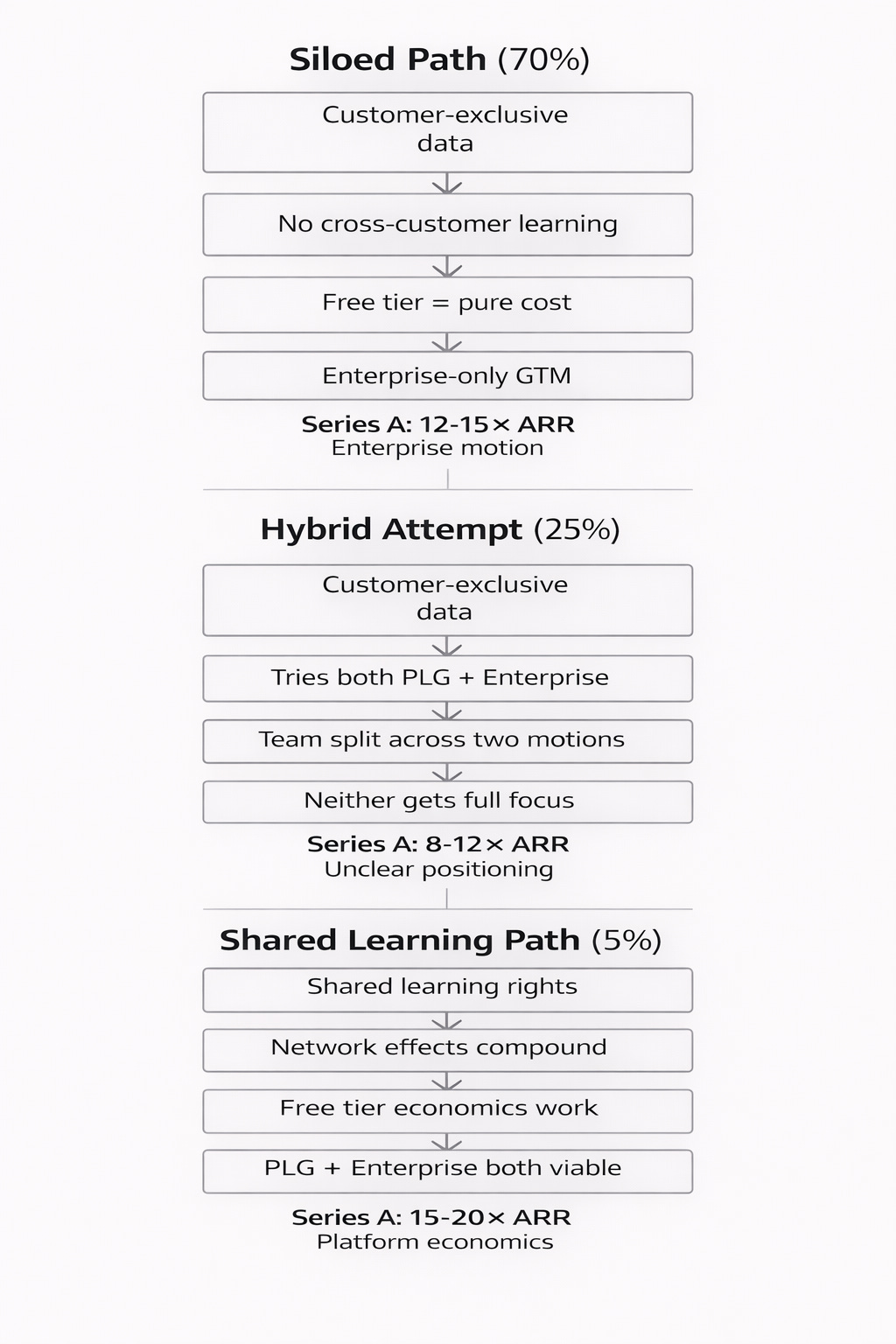

Fig 1. Execution Paths After the Data Custody Decision

Observable Signals & Checkpoints

Checkpoint 1: Q1–Q2 2026 (Months 29–34)

Signals to watch:

VP Sales or senior enterprise hire

VPC / on-prem deployment documentation

SOC2, SSO, audit logs appear

Pricing page launches

Sandbox de-emphasized in favor of demos

Interpretation:

Enterprise signals → base case strengthening

DevRel or growth hires → PLG attempt

Neither → unresolved tension
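The interpretation rule for this checkpoint can be sketched as a small lookup. The function name is hypothetical, and the handling of the case where both signal types appear is an assumption: the checkpoint does not list it, so the sketch borrows the label from the Hybrid Attempt scenario described later.

```python
def interpret_checkpoint_1(enterprise_signals: bool, devrel_or_growth_hires: bool) -> str:
    """Return the article's reading for a pair of observed signal types."""
    if enterprise_signals and devrel_or_growth_hires:
        # Assumption: this combination is not listed in the checkpoint itself;
        # the label comes from the Hybrid Attempt scenario (VP Sales AND DevRel/growth hire).
        return "hybrid attempt"
    if enterprise_signals:
        return "base case strengthening"
    if devrel_or_growth_hires:
        return "PLG attempt"
    return "unresolved tension"


print(interpret_checkpoint_1(enterprise_signals=True, devrel_or_growth_hires=False))
# base case strengthening
```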

Checkpoint 2: Q3 2026 (Months 35–37)

Signals to watch:

Fortune 500 logos on homepage

Case studies with quantified ROI

Analyst mentions (Gartner / Forrester)

Developer testimonials or community emergence

Interpretation:

Enterprise proof → enterprise GTM consolidating

Developer-led adoption → hybrid or PLG path forming

Final Check: Q4 2026 to Q1 2027 (Months 38–43)

Signals to watch:

Series A announcement

Valuation multiple

Lead investor profile

Press narrative

Interpretation:

12–15× ARR → enterprise motion confirmed

15–20× ARR → PLG success

8–12× ARR → hybrid or unclear positioning
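These bands can be expressed as a simple classifier. This is a sketch under stated assumptions: the function name is hypothetical, and because the bands touch at 12× and 15×, assigning those boundary values to the higher band is a choice made here, not something the bands themselves specify.

```python
def interpret_multiple(arr_multiple: float) -> str:
    """Map a Series A valuation multiple (valuation / ARR) to the reading above.

    Boundary values (12x, 15x) go to the higher band; that tie-break is an
    assumption of this sketch.
    """
    if arr_multiple < 8 or arr_multiple > 20:
        return "outside the ranges considered"
    if arr_multiple < 12:
        return "hybrid or unclear positioning"
    if arr_multiple < 15:
        return "enterprise motion confirmed"
    return "PLG success"


print(interpret_multiple(13))  # enterprise motion confirmed
print(interpret_multiple(10))  # hybrid or unclear positioning
```

A multiple alone is a weak signal near the band edges, so it is best read alongside the lead investor profile and press narrative.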

What Happens Next: Three Scenarios

Base Case (70%): Enterprise GTM Consolidates

Q1–Q2 2026:

VP Sales hire appears, VPC/on-prem deployment docs published, SOC2 certification completed

Q3 2026:

First enterprise case study published, Fortune 500 logos appear on homepage, /requestdemo becomes primary CTA

Q4 2026–Q1 2027 (Series A):

ARR: ~$800K–$1.2M

Valuation: 12–15× ARR

Lead investor: Enterprise SaaS specialist

Narrative: “AI-native engineering intelligence for large teams”

Hybrid Attempt (25%): Two Motions, One Team

Q1–Q2 2026:

VP Sales AND DevRel/growth hire, pricing page launches, mixed content (developer tutorials + executive insights)

Q3 2026:

Both enterprise deployments and self-serve trials active, messaging tries to serve both personas

Q4 2026–Q1 2027 (Series A):

ARR: $600K–$1M

Valuation: 8–12× ARR

Lead investor: Generalist or growth-stage

Narrative: Less crisp positioning

Operationally demanding at $5.95M seed scale

PLG Breakthrough (5%): The Falsification Case

Q1–Q2 2026:

Self-serve repo connection launches, real free tier with meaningful code analysis, pricing page with transparent tiers

Q3 2026:

Developer testimonials spread organically, IDE integration announced, bottom-up adoption visible

Q4 2026–Q1 2027 (Series A):

ARR: $1M+

Valuation: 15–20× ARR

Lead investor: PLG-focused firm

Narrative: “Developer-first engineering intelligence”

If this emerges, the core thesis is falsified.
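Multiplying each scenario's ARR range by its valuation multiple gives the implied Series A valuation range. The sketch below only does that arithmetic on the figures stated in the three scenarios; the function and variable names are illustrative, the base-case ARR is approximate in the scenario itself, and the PLG case has only an ARR floor ("$1M+"), so its upper bound is left open.

```python
# Scenario figures copied from the text: (ARR low, ARR high, multiple low, multiple high).
# An ARR high of None means the scenario gives only a floor ("$1M+").
scenarios = {
    "Base case (enterprise)": (800_000, 1_200_000, 12, 15),
    "Hybrid attempt": (600_000, 1_000_000, 8, 12),
    "PLG breakthrough": (1_000_000, None, 15, 20),
}


def implied_valuation_range(arr_low, arr_high, mult_low, mult_high):
    """Return (low, high) valuation in USD; high is None when ARR has no upper bound."""
    low = arr_low * mult_low
    high = arr_high * mult_high if arr_high is not None else None
    return low, high


for name, figures in scenarios.items():
    low, high = implied_valuation_range(*figures)
    high_text = f"${high / 1e6:.1f}M" if high is not None else "open-ended"
    print(f"{name}: ${low / 1e6:.1f}M to {high_text}")

# Base case (enterprise): $9.6M to $18.0M
# Hybrid attempt: $4.8M to $12.0M
# PLG breakthrough: $15.0M to open-ended
```

The ranges overlap, so a valuation figure alone will not separate the base case from the hybrid attempt; the multiple and the narrative carry more information.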

Falsification Criteria

PLG succeeds anyway → economics assumption wrong

Cross-learning revealed publicly → inference wrong

Hybrid scales cleanly → complexity overstated

Incumbents absorb category → selection thesis incomplete

What This Tests

Two structural claims:

Did early data custody choices quietly remove PLG as an option?

Current inference: Yes (60% confidence)

Can GTM outcomes be inferred before the company commits?

Current inference: Enterprise gravity is strong (70% confidence)

If the base case holds, it reinforces a core Operator Hypotheses claim:

Architectural decisions made under early enterprise pressure can determine GTM destiny long before anyone realizes the door has closed.

Final Check: January 2027 (Month 41)

By then, the path will be visible.

Either the hypothesis holds - or it breaks.

Both outcomes are valuable.

References

1. Peng et al. (2023). The Impact of AI on Developer Productivity. https://arxiv.org/abs/2302.06590

2. GitClear (2024). Coding on Copilot. https://www.gitclear.com/coding_on_copilot_data_shows_ais_downward_pressure_on_code_quality

3. Flux (2023). Subscription Services Agreement. https://askflux.ai/eula

4. Flux Blog. https://askflux.ai/blog

5. Flux Sandbox. https://sandbox.trial1.askflux.ai

6. Flux Product. https://askflux.ai/product

Analysis Date: January 2026

Next Review: January 2027

Not investment advice. This is live pattern research.

Pattern tracking:

Pattern #1: Research Grid (Month 11) - Services trap prediction - Check-back April 2026

Pattern #2: DatologyAI (Month 25) - Services trap verification - Check-back October 2026

Pattern #3: Chai Discovery (Month 20) - Compound fork effects - Check-back October 2026